
One API Key to Rule Them All: Why I Switched to OpenRouter

In January 2026, I had four different API provider dashboards open. Anthropic for Claude. OpenAI for GPT. Google for Gemini. Perplexity for search. Each one had its own API key, its own billing, its own credit balance, and its own verification process. Some needed phone verification. Some needed organization setup. Some held my credit card for days before releasing it.

I was building multiple projects at the same time - an AI agent for my client George (OpenClaw), my own AI discoverability audit tool, and a handful of hobby projects on the side. Each project needed the flexibility to use different models for different tasks. That meant managing separate API keys per project, per provider.

It was chaos. And then I found OpenRouter.

What Is OpenRouter?

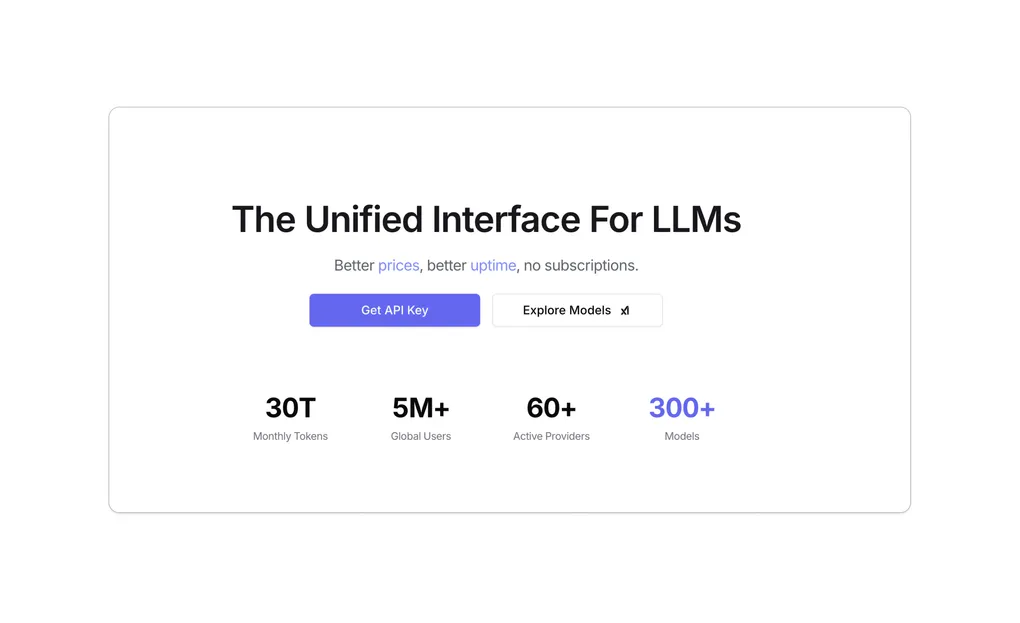

OpenRouter is an API gateway. You get one API key, one credit balance, and access to over 340 models from every major provider - all through a single endpoint.

That’s it. One key. One balance. Every model.

Here’s what’s available right now:

| Provider | Models |
|---|---|
| Anthropic | Claude Opus 4.6, 4.5, 4, 4.1 / Sonnet 4.6, 4.5, 4, 3.7 / Haiku 4.5, 3.5, 3 |
| OpenAI | GPT-4.1, 4.1-mini, 4.1-nano / GPT-4o, 4o-mini / o3, o3-pro, o4-mini |
| Google | Gemini 3.1 Pro, 3 Pro, 2.5 Pro / Gemini 3 Flash, 2.5 Flash, 2.5 Flash Lite |
| DeepSeek | DeepSeek V3.2, V3.1 / DeepSeek R1 |
| Meta | Llama 4 Maverick, 4 Scout / Llama 3.3 70B |
| Qwen | Qwen3 Coder, Qwen3.5 397B |
| xAI | Grok 4, Grok 4.1 Fast, Grok 3 |
| Mistral | Mistral Large, Devstral, Codestral |
| Others | Cohere, Perplexity, Amazon Nova, MiniMax, Nvidia Nemotron, and many more |

When I first saw this list, I didn’t believe it. Every model I was paying for separately, accessible through one key.

What My January Looked Like

Here’s the honest version. Every project had a .env file that looked like this:

    # January 2026 - the dark ages
    ANTHROPIC_API_KEY=sk-ant-...
    OPENAI_API_KEY=sk-...
    GOOGLE_API_KEY=AIza...
    PERPLEXITY_API_KEY=pplx-...

Four keys. Four dashboards. Four billing statements. And every time I wanted to try a different model, I had to go sign up for that provider, set up billing, get verified, generate a key, update my .env, and change my API client code.

For OpenClaw and the AI discoverability tool, I needed to switch between models constantly. Some tasks needed web search. Some needed fast cheap inference. Some needed deep reasoning. Each swap meant touching multiple files.

What My February Looks Like

    # February 2026 - one key to rule them all
    OPENROUTER_API_KEY=sk-or-...

One key. One balance. And switching models is just changing a string:

    // Switch from Claude to Gemini Flash - one line change
    const response = await fetch("https://openrouter.ai/api/v1/chat/completions", {
      method: "POST",
      headers: {
        "Authorization": `Bearer ${process.env.OPENROUTER_API_KEY}`,
        "Content-Type": "application/json"
      },
      body: JSON.stringify({
        model: "google/gemini-3-flash-preview", // swap this line, that's it
        messages: [{ role: "user", content: "..." }]
      })
    });

Want Claude instead? Change the model string to "anthropic/claude-sonnet-4.6". Want Perplexity with live web search? Change it to "perplexity/sonar-pro". Same endpoint, same key, same code structure.

No new accounts. No new billing. No verification. Just change the model name and go.
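To make the "same code structure" point concrete, here is a minimal sketch that wraps the request above in a function so the model ID becomes a plain argument. The helper names `buildChatRequest` and `chat` are my own for illustration, not part of any SDK, and the reply parsing assumes the OpenAI-style response shape (`choices[0].message.content`).

```javascript
// Endpoint taken from the example above.
const OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions";

// Hypothetical helper: builds the fetch arguments for any model ID.
function buildChatRequest(model, userContent, apiKey = process.env.OPENROUTER_API_KEY) {
  return {
    url: OPENROUTER_URL,
    options: {
      method: "POST",
      headers: {
        "Authorization": `Bearer ${apiKey}`,
        "Content-Type": "application/json"
      },
      body: JSON.stringify({
        model,
        messages: [{ role: "user", content: userContent }]
      })
    }
  };
}

// Hypothetical helper: sends the request and returns the reply text.
// Assumes an OpenAI-style response body (choices[0].message.content).
async function chat(model, userContent) {
  const { url, options } = buildChatRequest(model, userContent);
  const response = await fetch(url, options);
  if (!response.ok) {
    throw new Error(`OpenRouter request failed: ${response.status}`);
  }
  const data = await response.json();
  return data.choices[0].message.content;
}
```

With this in place, `chat("perplexity/sonar-pro", "...")` and `chat("anthropic/claude-sonnet-4.6", "...")` differ only in the first argument.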

Web Search Models - This Was a Big Deal for Me

For both the AI discoverability tool and OpenClaw, web search is critical. I need models that can search the internet in real time and return results with sources.

OpenRouter gives you access to models with built-in web search:

| Model | Type |
|---|---|
| openai/gpt-4o | Web search via parameters |
| openai/gpt-4o-mini | Web search via parameters |
| openai/gpt-4o-search-preview | Dedicated search model |
| openai/gpt-4o-mini-search-preview | Dedicated search model |
| perplexity/sonar | Always searches the web |
| perplexity/sonar-pro | Always searches the web |
| perplexity/sonar-pro-search | Agentic multi-step search |
| perplexity/sonar-reasoning-pro | Search + reasoning |
| perplexity/sonar-deep-research | Multi-step deep research |
| openrouter/auto | Routes to best model (inherits search) |

The other major models - Claude, Gemini, DeepSeek, Llama, Mistral, Grok, Qwen - all support tool use, which means you can pass them a custom search tool. But they don’t have native web search built in the way the OpenAI and Perplexity models above do.
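Passing a custom search tool could look roughly like the sketch below. It assumes the OpenAI-style function-calling format (`tools` in the request, `tool_calls` in the reply). The tool name `search_web` and both helper functions are hypothetical, and actually running the search is left to your own code.

```javascript
// Hypothetical tool definition in the OpenAI-style function-calling format.
const searchTool = {
  type: "function",
  function: {
    name: "search_web", // hypothetical name, backed by whatever search you run yourself
    description: "Search the web and return the top results with their URLs.",
    parameters: {
      type: "object",
      properties: {
        query: { type: "string", description: "Search terms" }
      },
      required: ["query"]
    }
  }
};

// Builds a request body that offers the search tool to the model.
function buildToolRequestBody(model, userContent) {
  return {
    model,
    messages: [{ role: "user", content: userContent }],
    tools: [searchTool]
  };
}

// Extracts any tool calls the model asked for from a parsed response.
// Returns an empty array when the model answered without calling a tool.
function extractToolCalls(responseJson) {
  const message = responseJson.choices?.[0]?.message;
  return (message?.tool_calls ?? []).map((call) => ({
    id: call.id,
    name: call.function.name,
    args: JSON.parse(call.function.arguments)
  }));
}
```

Your code then runs each requested search and sends the results back in a follow-up message with role `tool`, so the model can write its final answer.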

This matters because in the AI discoverability tool, the deep web search discovery phase requires searching multiple terms with a client’s name to find their online presence and check it against their master NAP (Name, Address, Phone). Having access to all these search-capable models through one API made it possible to test which one performs best for each specific task.
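For a rough idea of what "checking against the master NAP" can mean in code, here is a simplified sketch. It is not the audit tool's actual logic: the normalizers and `compareNap` are hypothetical, and real address matching usually needs more care (abbreviations, suite numbers, country codes).

```javascript
// Hypothetical normalizer: lowercases and collapses punctuation and whitespace.
function normalizeText(value) {
  return value.toLowerCase().replace(/[^a-z0-9]+/g, " ").trim();
}

// Hypothetical normalizer: keeps digits only.
// A leading country code is NOT handled here.
function normalizePhone(value) {
  return value.replace(/\D/g, "");
}

// Compares one discovered listing against the master record, field by field.
function compareNap(master, listing) {
  return {
    name: normalizeText(master.name) === normalizeText(listing.name),
    address: normalizeText(master.address) === normalizeText(listing.address),
    phone: normalizePhone(master.phone) === normalizePhone(listing.phone)
  };
}
```

A listing that comes back with `phone: false` but a matching name and address is the kind of inconsistency an audit would flag.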

What I’ve Learned About Which Models to Use Where

After a month of real production use across multiple projects, here’s what I’ve found:

Gemini 3 Flash is the best value for web search tasks. When I need to search the web for a client’s online presence - checking directory listings, social profiles, review sites - Gemini 3 Flash handles it well and it’s fast and cheap. For high-volume discovery tasks where you’re making dozens of search calls per audit, cost matters. Gemini 3 Flash hits the sweet spot.

Perplexity is still king for cited research. When I need results with actual source URLs that I can verify and present to a client, Perplexity wins. The citations matter. In both the AI discoverability tool and George’s OpenClaw agent, there are moments where I need to say “here’s what we found, and here’s the source.” Perplexity does that natively.

Claude Sonnet 4.6 and Gemini 3 Pro are overkill for many tasks. They’re powerful models, no question. But for AI discoverability tasks like scanning online presence and generating audit results, they’re expensive and the quality difference doesn’t justify the cost. Save these for tasks that genuinely need deep reasoning or complex content generation.

The ability to test this quickly is the real advantage. Before OpenRouter, testing a new model meant setting up a new provider account. Now I just change a string and compare results. That speed of experimentation is where the real value lives.
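One way to keep these choices in one place, and to run the same prompt across several candidates, is sketched below. The model IDs are the ones quoted earlier in this post. The task labels, `pickModel`, and `compareModels` are hypothetical names. `callModel` is whatever async function you already use to send a prompt to a model, passed in so nothing here depends on a specific client.

```javascript
// Model IDs as quoted earlier in this post; the task labels are hypothetical.
const MODEL_FOR_TASK = {
  bulkDiscovery: "google/gemini-3-flash-preview",
  citedResearch: "perplexity/sonar-pro",
  deepReasoning: "anthropic/claude-sonnet-4.6"
};

// Looks up the model for a task, failing loudly on unknown labels.
function pickModel(task) {
  if (!Object.hasOwn(MODEL_FOR_TASK, task)) {
    throw new Error(`No model configured for task: ${task}`);
  }
  return MODEL_FOR_TASK[task];
}

// Runs one prompt against several models in parallel.
// callModel is an injected async function: (model, prompt) => string.
// A failure for one model is reported in its entry and does not stop the others.
async function compareModels(models, prompt, callModel) {
  const settled = await Promise.allSettled(
    models.map((model) => callModel(model, prompt))
  );
  return settled.map((result, i) => ({
    model: models[i],
    ok: result.status === "fulfilled",
    output: result.status === "fulfilled" ? result.value : String(result.reason)
  }));
}
```

Changing which model handles a task is then a one-line edit to `MODEL_FOR_TASK`.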

The Business Model - Do They Cost More?

This was my first question too. Here’s how it actually works:

Per-token pricing is the same as going direct. OpenRouter doesn’t mark up the inference costs. If Claude Sonnet costs X per million tokens on Anthropic’s dashboard, it costs X on OpenRouter.

The catch is a 5.5% credit purchase fee. When you top up your balance via card, you pay 5.5% on top. So $100 of credits costs you $105.50. Crypto payments have a slightly lower 5% fee.
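The same arithmetic as a small sketch. `creditPurchaseTotal` is a hypothetical helper, the rates are the ones quoted in this post, and it ignores anything else a real checkout might add (minimum fees, tax). It works in cents to avoid floating-point drift.

```javascript
// Total charged for a credit top-up, given a fee rate.
// Rates are the ones quoted in this post: 0.055 for card, 0.05 for crypto.
function creditPurchaseTotal(creditsUsd, feeRate = 0.055) {
  const creditsCents = Math.round(creditsUsd * 100);
  const feeCents = Math.round(creditsCents * feeRate);
  return (creditsCents + feeCents) / 100;
}

console.log(creditPurchaseTotal(100));       // 105.5 (card)
console.log(creditPurchaseTotal(100, 0.05)); // 105 (crypto)
```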

There’s also a Bring Your Own Key option. If you connect your own provider API keys to OpenRouter, the first 1 million requests per month are free. After that, a 5% fee kicks in.

So yes, it costs slightly more than going direct. About 5.5%. But here’s my take - for the projects I’m working on, that 5.5% is worth it many times over. The time I save not managing four separate billing dashboards, the speed of switching models, the single credit balance that I can actually track - all of that is worth more than 5.5%.

At enterprise scale with massive volume, going direct makes more sense. You can negotiate volume discounts directly with providers. But for indie builders, consultants, and small teams shipping multiple AI-powered projects? OpenRouter is the obvious choice.

29 Free Models

One more thing I didn’t expect - OpenRouter offers 29 free models. Rate-limited (20 requests per minute, 200 per day), but free. Models like DeepSeek R1, Llama 3.3 70B, Mistral Small 3.1, and Qwen3 are available at zero cost.
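To stay under those limits during prototyping, a client-side guard helps. Below is a minimal sliding-window sketch using the numbers quoted above as defaults. `createRateLimiter` is a hypothetical helper, and the server may count its windows differently, so treat it as an approximation rather than a guarantee.

```javascript
// Client-side guard for the free-tier limits quoted in this post.
// The clock is injectable so the limiter can be tested without waiting.
function createRateLimiter({ perMinute = 20, perDay = 200, now = () => Date.now() } = {}) {
  const MINUTE_MS = 60_000;
  const DAY_MS = 24 * 60 * MINUTE_MS;
  let timestamps = []; // send times within the last 24 hours

  return {
    // Returns true and records the request if it fits both windows,
    // otherwise returns false and records nothing.
    tryAcquire() {
      const t = now();
      timestamps = timestamps.filter((ts) => t - ts < DAY_MS);
      const inLastMinute = timestamps.filter((ts) => t - ts < MINUTE_MS).length;
      if (inLastMinute >= perMinute || timestamps.length >= perDay) {
        return false;
      }
      timestamps.push(t);
      return true;
    }
  };
}
```

Call `tryAcquire()` before each request to a free model and back off when it returns `false`.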

For prototyping and testing, this is huge. I can spin up a new project, test my architecture with a free model, and only switch to a paid model when I’m ready for production quality.

The Honest Downsides

I said I’d be honest, so here are the things to know:

The 5.5% fee adds up at scale. If you’re spending thousands per month on API calls, that percentage becomes real money. At that point, evaluate whether direct provider relationships and volume discounts make more sense.

There’s a small latency overhead. Every request goes through OpenRouter’s proxy, adding roughly 50-70ms. For chat applications and batch processing, this is negligible. For latency-critical real-time applications, it’s worth measuring.
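If you want to measure the overhead for your own workload rather than rely on my number, a sketch like the following works. All names are hypothetical. `viaGateway` and `direct` are async functions you supply that send the same prompt to the same model, one through OpenRouter and one straight to the provider.

```javascript
// Times a single async call and returns the elapsed milliseconds.
async function timeCall(fn) {
  const start = performance.now();
  await fn();
  return performance.now() - start;
}

// Median is less sensitive to the occasional slow request than the mean.
function median(values) {
  if (values.length === 0) throw new Error("No samples");
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Alternates the two call paths so network conditions affect both equally.
async function compareLatency(viaGateway, direct, runs = 10) {
  const gatewayMs = [];
  const directMs = [];
  for (let i = 0; i < runs; i++) {
    gatewayMs.push(await timeCall(viaGateway));
    directMs.push(await timeCall(direct));
  }
  const gatewayMedianMs = median(gatewayMs);
  const directMedianMs = median(directMs);
  return { gatewayMedianMs, directMedianMs, overheadMs: gatewayMedianMs - directMedianMs };
}
```

Model response time varies a lot from call to call, so use short prompts and enough runs for the medians to settle.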

You’re adding a dependency. If OpenRouter has downtime, all your providers go down simultaneously. With direct keys, a problem at Anthropic doesn’t affect your OpenAI calls. With OpenRouter, it could.

For my use cases, none of these are dealbreakers. But they’re worth knowing.

The Bottom Line

I went from managing four API provider accounts, four sets of credentials, four billing dashboards, and a mess of .env files - to one key, one balance, and the ability to swap between 340+ models by changing a single string.

For anyone building AI-powered projects - whether you’re a solo developer, a consultant building client tools, or a small team shipping fast - OpenRouter removes friction that you didn’t realize was slowing you down.

I’m not affiliated with OpenRouter. I’m just a builder who got tired of the chaos and found something that works.

One API key. Every model. That’s it.