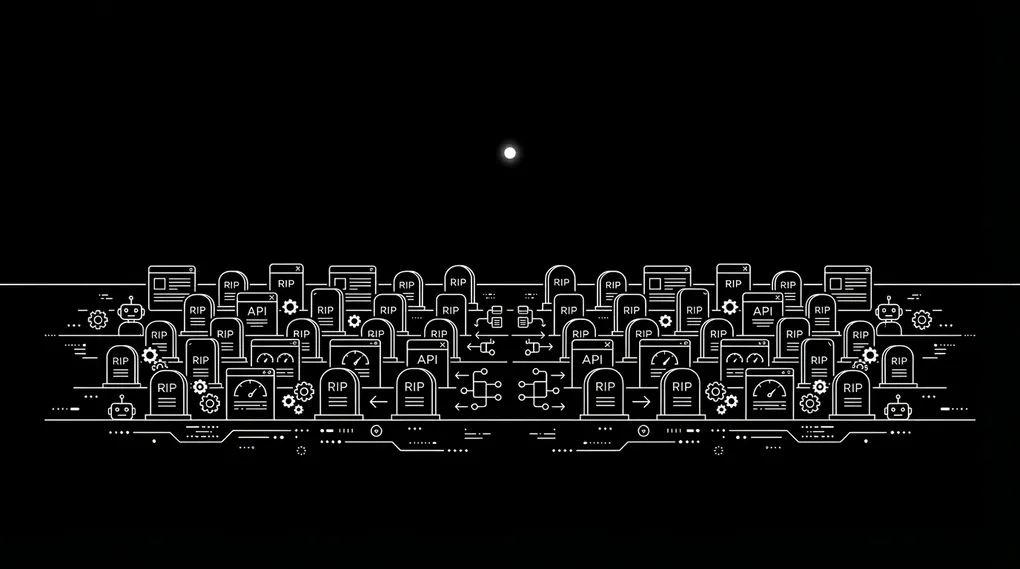

I Have a Graveyard of Automations

Apps, dashboards, custom AI agents, n8n workflows. I built them, tested them, refined them, and stopped using them within weeks.

Some days I spend eight hours building an automation, then watch it fail the moment it goes live. Real data behaves nothing like test data.

AI agents are worse. A platform pushes an update, the code format in your settings changes, and if you’re non-technical you can’t find the bug. You lose three days bringing your AI assistant back online, all to keep what you already had.

The Overhype Problem

Scroll LinkedIn and you'll find someone announcing that they replaced their $199/mo CRM with an AI agent built in two hours. What they actually built runs on their laptop, breaks on one bad input, and has no auth, no error handling, and no backup plan. That’s a demo, not a CRM.

I’m optimistic about AI. Things are possible today that I don’t understand yet, and I learn something new every week. The overhyping is what bothers me.

A Real Example: George and the Circle Posts

My client George runs a coaching practice. After every weekly call with a private client, he posts the meeting recording, a written recap, action items, and future topics into a Circle space only that client can see. Each post takes twenty to thirty minutes. Across his roster, that’s three hours a week of tedious work right after a draining call. He hated it.

His pain was real. The hype promised he could fix it in two hours.

George opened his OpenClaw agent, Q, and asked it to build the automation. Q laid out a plan and listed the APIs it needed. George generated a Circle API key and pasted it into the chat (mistake one: a secret doesn't belong in a chat transcript). Then he hit the Zoom side and got stuck finding the right endpoints. Q helped him write a clean SKILL.md with the full SOP, but the workflow never ran end to end.

Eight hours in, he still wasn't saving those three hours a week.

I’m not above this either. Last month I tried swapping Gemma 4 in as the model for Q. I fell into a rabbit hole, broke Q, and the agent was offline for two days before I fixed it.

The Right Approach for Non-Technical Founders

Systems before tools. Before you open an AI agent and ask it to build something, write the workflow down by hand. List every input, every output, every tool the work touches, and every decision point. If you can’t describe the process on one page, no agent is going to build it for you.
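If you'd rather keep that one page as a file than on paper, here is a minimal sketch of what it could look like in Python. Everything in it is an assumption made for illustration: the `Step` and `Workflow` classes, the step names, and the field labels are made up, loosely modeled on George's recap post. The point is only that a written-down workflow can be checked for gaps before any agent touches it.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    name: str
    tool: str                 # the tool this step touches
    inputs: list[str]         # what the step needs before it can start
    outputs: list[str]        # what the step produces
    decision: str = ""        # the judgment call, if any; empty when there is none


@dataclass
class Workflow:
    name: str
    steps: list[Step] = field(default_factory=list)

    def tools(self) -> list[str]:
        """Distinct tools, in the order the workflow first touches them."""
        return list(dict.fromkeys(step.tool for step in self.steps))

    def missing_inputs(self, given: set[str]) -> list[str]:
        """Inputs that are neither supplied up front nor produced by an earlier step."""
        available = set(given)
        missing: list[str] = []
        for step in self.steps:
            for item in step.inputs:
                if item not in available and item not in missing:
                    missing.append(item)
            available.update(step.outputs)
        return missing


# Hypothetical write-up of the weekly recap post (names are illustrative).
recap_post = Workflow(
    name="post-call recap",
    steps=[
        Step("fetch recording", "Zoom", ["meeting id"], ["recording link"]),
        Step(
            "write recap",
            "by hand",
            ["recording link"],
            ["recap", "action items", "future topics"],
            decision="which topics carry over to next week?",
        ),
        Step(
            "publish post",
            "Circle",
            ["recording link", "recap", "action items", "future topics", "client space"],
            ["post url"],
        ),
    ],
)

print(recap_post.tools())
print(recap_post.missing_inputs({"meeting id"}))
```

Run with only the meeting id supplied, the check flags `client space`: nothing in the write-up says how the workflow knows which private space belongs to which client. That is the kind of gap you want to find on paper, not eight hours into a build.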

Then check the boring parts first. The agent is only as good as the data and APIs behind it. Does each tool in the chain have a stable API? Do you have the permissions to connect them? What happens when one step fails? A workflow that touches four tools has four places to break, and the person maintaining it has to understand all four.
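To make "what happens when one step fails?" concrete, here is a small sketch under stated assumptions. The `run_chain` helper and the two stand-in functions are hypothetical and call no real Zoom or Circle API. It shows one possible answer: stop at the first failure and record which tool broke and what had already finished.

```python
from typing import Callable

StepFn = Callable[[dict], dict]


def run_chain(steps: list[tuple[str, StepFn]], data: dict) -> dict:
    """Run steps in order; stop at the first failure and report where it happened."""
    completed: list[str] = []
    for tool, action in steps:
        try:
            data = action(data)
        except Exception as exc:
            # Keep the data from the last successful step so the run can be resumed by hand.
            return {
                "ok": False,
                "failed_at": tool,
                "error": str(exc),
                "completed": completed,
                "data": data,
            }
        completed.append(tool)
    return {"ok": True, "failed_at": None, "error": None, "completed": completed, "data": data}


# Stand-in steps for illustration only; real ones would call the tools' APIs.
def fetch_recording(data: dict) -> dict:
    return {**data, "recording": "link-to-recording"}


def publish_post(data: dict) -> dict:
    raise PermissionError("API key lacks write access")


result = run_chain(
    [("Zoom", fetch_recording), ("Circle", publish_post)],
    {"meeting": "weekly call"},
)
print(result["failed_at"], result["completed"])
```

Here the Zoom step finishes and the Circle step fails, so the result names Circle as the break point and still carries the recording link. Whoever maintains the workflow knows where to look and what can be finished manually.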

If that sounds like a lot of work, it is. That’s why the “built it in two hours” posts are either demos or lies. Real automation is slow, boring, and worth doing only after you’ve run the workflow by hand enough times to know what you’re automating.

Building Is the Easy Part

Maintaining is the job.

The flex is an automation that runs for six months without you touching it, not a screenshot of your first green test run.

If you’re spending more time fixing than building, that’s the job. Nobody posts that part.

Related Reading

- Systems Before Tools: Why Most Agents Fail at AI — the framework that keeps me out of my own graveyard

- What a Real AI Employee Does After 5 Weeks — the full story of George’s agent, Q, and what it actually runs

- I Deployed OpenClaw for a Client. Here’s What Matters. — why the knowledge base matters more than the agent on top

- Why Your AI Tools Give You Generic Answers — the data problem behind every broken automation